Note: MMM has been superseded by PXC (Percona XtraDB Cluster).

MMM is no longer officially supported.

**NOTE:** By now there are some good alternatives to MySQL-MMM. Maybe you want to check out [[http://codership.com/content/using-galera-cluster|Galera Cluster]] which is part of [[https://downloads.mariadb.org/mariadb-galera/|MariaDB Galera Cluster]] and [[http://www.percona.com/software/percona-xtradb-cluster|Percona XtraDB Cluster]].

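1, Introduction to MMM

MMM (Master-Master replication manager for MySQL) is a set of scripts that supports dual-master failover and day-to-day management of a dual-master setup. MMM is written in Perl and is mainly used to monitor and manage MySQL Master-Master replication. Although it is called dual-master replication, the application may write to only one master at any given time; the standby master serves part of the read traffic, which keeps it warm and speeds up a master switch. In short, MMM provides failover on the one hand, and on the other its bundled tool scripts can load-balance reads across multiple slaves.

MMM can remove the virtual IP from a server with high replication lag, either automatically or manually. It can also back up data and resynchronize data between two nodes. Because MMM cannot fully guarantee data consistency, it suits workloads that tolerate some inconsistency but want the highest possible availability. For workloads with strict consistency requirements, MMM is strongly discouraged as a high-availability architecture.

As shown in the figure below: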

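2, Prepare three MySQL servers

2.1 Server preparation

Virtual machines are fine for this. MySQL was already installed on three machines for an earlier MHA experiment, so MMM is deployed directly on those three existing MySQL servers.

| Package name | Description |
| --- | --- |
| mysql-mmm-agent | MySQL-MMM Agent |
| mysql-mmm-monitor | MySQL-MMM Monitor |
| mysql-mmm-tools | MySQL-MMM Tools |

MySQL master-slave setup: http://blog.csdn.net/mchdba/article/details/44734597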

2.2 Database configuration

db1 (192.168.52.129): vim /etc/my.cnf

server-id=129

log_slave_updates = 1

auto-increment-increment = 2 # each new value increases by 2

auto-increment-offset = 1 # starting value of auto-increment columns: 1

db2 (192.168.52.128): vim /etc/my.cnf

server-id=230

log_slave_updates = 1

auto-increment-increment = 2 # each new value increases by 2

auto-increment-offset = 2 # starting value of auto-increment columns: 2

db3 (192.168.52.131): vim /etc/my.cnf

server-id=331

log_slave_updates = 1
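The effect of the two auto-increment settings can be illustrated with a small Python sketch. It is a simplified model (values start at the offset and grow by the increment, as on an empty table), not MySQL's actual allocation code, and the function name `auto_increment_values` is made up for this example.

```python
def auto_increment_values(offset, increment, count):
    """Return the first `count` AUTO_INCREMENT values in the simplified model."""
    return [offset + i * increment for i in range(count)]


# db1: auto-increment-offset = 1, db2: auto-increment-offset = 2,
# both with auto-increment-increment = 2 (the values from my.cnf above).
db1_ids = auto_increment_values(offset=1, increment=2, count=5)
db2_ids = auto_increment_values(offset=2, increment=2, count=5)

print(db1_ids)  # [1, 3, 5, 7, 9]
print(db2_ids)  # [2, 4, 6, 8, 10]
```

Because the two sequences never overlap, rows inserted on either master cannot collide on an auto-increment key, even if both masters briefly accept writes during a switch.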

3, Install MMM

3.1 Download MMM

Download page: http://mysql-mmm.org/downloads (the latest version is 2.2.1), as shown below:

Download with wget: wget http://mysql-mmm.org/_media/:mmm2:mysql-mmm-2.2.1.tar.gz

3.2 Prepare Perl and the required libraries

yum install -y perl-*

yum install -y libart_lgpl.x86_64

yum install -y mysql-mmm.noarch # this one failed in this environment

yum install -y rrdtool.x86_64

yum install -y rrdtool-perl.x86_64

Alternatively, run the install_mmm.sh script to install directly.

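3.3 Install MMM

According to the official recommendation, the MMM monitor should run on a dedicated server. To save resources in this experiment, it is installed on one of the slaves instead, on 192.168.52.131.

mv :mmm2:mysql-mmm-2.2.1.tar.gz mysql-mmm-2.2.1.tar.gz

tar -xvf mysql-mmm-2.2.1.tar.gz

cd mysql-mmm-2.2.1

make

make install

After installation, MMM's directory layout is as follows:

| Directory | Description |
| --- | --- |
| /usr/lib64/perl5/vendor_perl/ | Main Perl modules used by MMM |
| /usr/lib/mysql-mmm | Main scripts used by MMM |
| /usr/sbin | Location of the main MMM commands |
| /etc/init.d/ | Init scripts for the MMM agent and monitor services |
| /etc/mysql-mmm | MMM configuration directory; all configuration files live here by default |
| /var/log/mysql-mmm | Default location of the MMM logs |

This completes the basic installation. Next come the configuration files: mmm_common.conf and mmm_agent.conf are the agent-side files, and mmm_mon.conf is the monitor-side file.

Copy mmm_common.conf to db1 and db2 (db3 and the monitor are the same machine, so no copy is needed there).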

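4, MMM configuration on the MySQL data nodes

4.1 Configure mmm_common.conf on db3

The agent-side configuration files must be set up on db1, db2, and db3. MMM was installed on db3 here, so the file is edited directly on db3:

vim /etc/mysql-mmm/mmm_common.conf

[root@oraclem1 ~]# cat /etc/mysql-mmm/mmm_common.conf

active_master_role writer

<host default>

cluster_interface eth0

pid_path /var/run/mmm_agentd.pid

bin_path /usr/lib/mysql-mmm/

replication_user repl

replication_password repl_1234

agent_user mmm_agent

agent_password mmm_agent_1234

</host>

<host db1>

ip 192.168.52.129

mode master

peer db2

</host>

<host db2>

ip 192.168.52.128

mode master

peer db1

</host>

<host db3>

ip 192.168.52.131

mode slave

</host>

<role writer>

hosts db1, db2

ips 192.168.52.120

mode exclusive

</role>

<role reader>

hosts db1, db2, db3

ips 192.168.52.129, 192.168.52.128, 192.168.52.131

mode balanced

</role>

[root@oraclem1 ~]#

Here replication_user is the replication account, agent_user is the account used by the agent, and mode in a host section marks the host as a master (active or standby) or a slave. In the writer role, mode exclusive means the role is exclusive: only one host can hold it at any time.

db1 and db2 also need the agent configuration, so copy mmm_common.conf from db3 to /etc/mysql-mmm on db1 and db2.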
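Before copying the file around, it can help to sanity-check the master/peer declarations. The following Python sketch is illustrative only: it assumes the usual `<host name>` / `<role name>` block layout of mmm_common.conf, and the functions `parse_mmm_conf` and `check_peers` are hypothetical helpers written for this example, not part of MMM.

```python
import re

SAMPLE = """
active_master_role writer

<host db1>
    ip   192.168.52.129
    mode master
    peer db2
</host>

<host db2>
    ip   192.168.52.128
    mode master
    peer db1
</host>

<host db3>
    ip   192.168.52.131
    mode slave
</host>

<role writer>
    hosts db1, db2
    ips   192.168.52.120
    mode  exclusive
</role>
"""


def parse_mmm_conf(text):
    """Parse 'key value' lines grouped in <kind name> ... </kind> blocks."""
    top, sections = {}, {}
    current = top
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        opening = re.fullmatch(r"<(\w+)\s+(\w+)>", line)
        if opening:
            # Section key is a (kind, name) tuple, e.g. ("host", "db1").
            current = sections.setdefault(opening.groups(), {})
        elif re.fullmatch(r"</\w+>", line):
            current = top
        else:
            parts = line.split(None, 1)
            current[parts[0]] = parts[1] if len(parts) > 1 else ""
    return top, sections


def check_peers(sections):
    """Return a list of problems with master/peer declarations (empty if none)."""
    problems = []
    for (kind, name), body in sections.items():
        if kind != "host" or body.get("mode") != "master":
            continue
        peer = body.get("peer")
        peer_body = sections.get(("host", peer))
        if peer_body is None:
            problems.append(f"{name}: peer {peer!r} is not defined")
        elif peer_body.get("peer") != name:
            problems.append(f"{name}: peer {peer} does not point back")
    return problems


top, sections = parse_mmm_conf(SAMPLE)
print(top["active_master_role"])  # writer
print(check_peers(sections))      # []
```

The check only verifies that each master names a peer that names it back, which is the relationship the db1 and db2 sections rely on.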

4.2 Copy mmm_common.conf to db1 and db2

scp /etc/mysql-mmm/mmm_common.conf data01:/etc/mysql-mmm/mmm_common.conf

scp /etc/mysql-mmm/mmm_common.conf data02:/etc/mysql-mmm/mmm_common.conf

4.3 Configure mmm_agent.conf

Configure the agent on db1, db2, and db3.

db1 (192.168.52.129):

[root@data01 mysql-mmm]# cat /etc/mysql-mmm/mmm_agent.conf

include mmm_common.conf

this db1

[root@data01 mysql-mmm]#

db2 (192.168.52.128):

[root@data02 mysql-mmm-2.2.1]# vim /etc/mysql-mmm/mmm_agent.conf

include mmm_common.conf

this db2

db3 (192.168.52.131):

[root@oraclem1 vendor_perl]# cat /etc/mysql-mmm/mmm_agent.conf

include mmm_common.conf

this db3

[root@oraclem1 vendor_perl]#

4.4 Configure the monitor

[root@oraclem1 vendor_perl]# cat /etc/mysql-mmm/mmm_mon.conf

include mmm_common.conf

<monitor>

ip 127.0.0.1

pid_path /var/run/mmm_mond.pid

bin_path /usr/lib/mysql-mmm/

status_path /var/lib/misc/mmm_mond.status

ping_ips 192.168.52.129, 192.168.52.128, 192.168.52.131

</monitor>

<host default>

monitor_user mmm_monitor

monitor_password mmm_monitor_1234

</host>

debug 0

[root@oraclem1 vendor_perl]#

The only change to the stock configuration file here is that the IP addresses of all monitored hosts in the architecture were added to ping_ips.

4.5 Create the monitoring users

| User | Description | Privileges |
| --- | --- | --- |
| Monitor user | Used by the MMM monitor to check the state of all MySQL servers | REPLICATION CLIENT |
| Agent user | Used by the MMM agent, mainly to change read_only on the masters | SUPER, REPLICATION CLIENT, PROCESS |
| repl (replication account) | Used for replication | REPLICATION SLAVE |

Grant the privileges on all three servers (db1, db2, db3). MySQL was already installed for my earlier MHA experiment and the replication account repl already existed; it was created with: GRANT REPLICATION SLAVE ON *.* TO 'repl'@'192.168.52.%' IDENTIFIED BY 'repl_1234'; Monitor user: GRANT REPLICATION CLIENT ON *.* TO 'mmm_monitor'@'192.168.52.%' IDENTIFIED BY 'mmm_monitor_1234'; Agent user: GRANT SUPER, REPLICATION CLIENT, PROCESS ON *.* TO 'mmm_agent'@'192.168.52.%' IDENTIFIED BY 'mmm_agent_1234'; as shown below:

mysql> GRANT REPLICATION CLIENT ON *.* TO 'mmm_monitor'@'192.168.52.%' IDENTIFIED BY 'mmm_monitor_1234';

Query OK, 0 rows affected (0.26 sec)

mysql> GRANT SUPER, REPLICATION CLIENT, PROCESS ON *.* TO 'mmm_agent'@'192.168.52.%' IDENTIFIED BY 'mmm_agent_1234';

Query OK, 0 rows affected (0.02 sec)

mysql>

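If you are building everything from scratch, also run the GRANT for the replication account repl (all three statements must be executed on each of the three servers): GRANT REPLICATION SLAVE ON *.* TO 'repl'@'192.168.52.%' IDENTIFIED BY '123456'; The password must match replication_password in mmm_common.conf, which is repl_1234 in the configuration above. As shown below:

mysql> GRANT REPLICATION SLAVE ON *.* TO 'repl'@'192.168.52.%' IDENTIFIED BY '123456';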

Query OK, 0 rows affected (0.01 sec)

mysql>

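5, Set up replication among db1, db2, and db3 for MMM

MySQL is already installed on db1, db2, and db3; all that remains is to build the replication topology that MMM manages.

db1(192.168.52.129) --> db2(192.168.52.128)

db1(192.168.52.129) --> db3(192.168.52.131)

db2(192.168.52.128) --> db1(192.168.52.129)

5.1 Set up replication from db1 to db2 and db3

First check the master status on db1 (192.168.52.129):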

mysql> show master status;

+------------------+----------+--------------+--------------------------------------------------+-------------------+

| File | Position | Binlog_Do_DB |Binlog_Ignore_DB | Executed_Gtid_Set |

+------------------+----------+--------------+--------------------------------------------------+-------------------+

| mysql-bin.000208 | 1248 | user_db |mysql,test,information_schema,performance_schema | |

+------------------+----------+--------------+--------------------------------------------------+-------------------+

1 row in set (0.03 sec)

mysql>

Then set up the replication link on db2 and db3 as follows:

STOP SLAVE;

RESET SLAVE;

CHANGE MASTER TO MASTER_HOST='192.168.52.129',MASTER_USER='repl',MASTER_PASSWORD='repl_1234',MASTER_LOG_FILE='mysql-bin.000208',MASTER_LOG_POS=1248;

START SLAVE;

SHOW SLAVE STATUS\G

Replication is fine once both threads report Yes and the lag is 0.

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

Seconds_Behind_Master: 0
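The "two Yes and a 0" check can be automated. Below is a minimal Python sketch that parses the text printed by SHOW SLAVE STATUS\G and applies the same rule; the function names `parse_slave_status` and `replication_healthy` are hypothetical, and the sample text contains only the three fields discussed above.

```python
def parse_slave_status(text):
    """Turn 'Key: value' lines from SHOW SLAVE STATUS\\G output into a dict."""
    fields = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def replication_healthy(fields, max_lag=0):
    """True when both replication threads run and lag is at most max_lag seconds."""
    lag = fields.get("Seconds_Behind_Master", "NULL")
    return (
        fields.get("Slave_IO_Running") == "Yes"
        and fields.get("Slave_SQL_Running") == "Yes"
        and lag.isdigit()
        and int(lag) <= max_lag
    )


sample = """
        Slave_IO_Running: Yes
       Slave_SQL_Running: Yes
   Seconds_Behind_Master: 0
"""

print(replication_healthy(parse_slave_status(sample)))  # True
```

A Seconds_Behind_Master of NULL (reported when a replication thread is stopped) is treated as unhealthy because it is not a number.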

5.2 Set up replication from db2 to db1

Check the master status on db2 (192.168.52.128), then set up the replication link:

mysql> show master status;

+------------------+----------+--------------+--------------------------------------------------+-------------------+

| File | Position | Binlog_Do_DB |Binlog_Ignore_DB | Executed_Gtid_Set |

+------------------+----------+--------------+--------------------------------------------------+-------------------+

| mysql-bin.000066 | 1477 | user_db |mysql,test,information_schema,performance_schema | |

+------------------+----------+--------------+--------------------------------------------------+-------------------+

1 row in set (0.04 sec)

mysql>

Then, on db1, set up the replication link that pulls from db2:

STOP SLAVE;

CHANGE MASTER TO MASTER_HOST='192.168.52.128',MASTER_USER='repl',MASTER_PASSWORD='repl_1234',MASTER_LOG_FILE='mysql-bin.000066',MASTER_LOG_POS=1477;

START SLAVE;

SHOW SLAVE STATUS\G

Replication is fine once both threads report Yes and the lag is 0.

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

Seconds_Behind_Master: 0

6, Start the agent service

Start the agent service on each of db1, db2, and db3:

[root@data01 mysql-mmm]# /etc/init.d/mysql-mmm-agent start

Daemon bin: '/usr/sbin/mmm_agentd'

Daemon pid: '/var/run/mmm_agentd.pid'

Starting MMM Agent daemon... Ok

[root@data01 mysql-mmm]#

7, Start the monitor service

Start the monitor service on the monitor server, 192.168.52.131:

Register it as a system service: chkconfig --add mysql-mmm-monitor

Start it: service mysql-mmm-monitor start

[root@oraclem1 ~]# service mysql-mmm-monitor start

Daemon bin: '/usr/sbin/mmm_mond'

Daemon pid: '/var/run/mmm_mond.pid'

Starting MMM Monitor daemon: Ok

[root@oraclem1 ~]#

8, Some management operations

Check the status:

[root@oraclem1 mysql-mmm]# mmm_control show

db1(192.168.52.129) master/AWAITING_RECOVERY. Roles:

db2(192.168.52.128) master/AWAITING_RECOVERY. Roles:

db3(192.168.52.131) slave/AWAITING_RECOVERY. Roles:

[root@oraclem1 mysql-mmm]#

8.1 Set the hosts online:

[root@oraclem1 mysql-mmm]# mmm_control show

db1(192.168.52.129) master/AWAITING_RECOVERY. Roles:

db2(192.168.52.128) master/AWAITING_RECOVERY. Roles:

db3(192.168.52.131) slave/AWAITING_RECOVERY. Roles:

[root@oraclem1 mysql-mmm]# mmm_control set_online db1

OK: State of 'db1' changed to ONLINE. Now you can wait some time and check its new roles!

[root@oraclem1 mysql-mmm]# mmm_control set_online db2

OK: State of 'db2' changed to ONLINE. Now you can wait some time and check its new roles!

[root@oraclem1 mysql-mmm]# mmm_control set_online db3

OK: State of 'db3' changed to ONLINE. Now you can wait some time and check its new roles!

[root@oraclem1 mysql-mmm]#

8.2 Check all databases

[root@oraclem1 mysql-mmm]# mmm_control checks

db2 ping [last change: 2015/04/14 00:10:57] OK

db2 mysql [last change: 2015/04/14 00:10:57] OK

db2 rep_threads [last change: 2015/04/14 00:10:57] OK

db2 rep_backlog [last change: 2015/04/14 00:10:57] OK: Backlog is null

db3 ping [last change: 2015/04/14 00:10:57] OK

db3 mysql [last change: 2015/04/14 00:10:57] OK

db3 rep_threads [last change: 2015/04/14 00:10:57] OK

db3 rep_backlog [last change: 2015/04/14 00:10:57] OK: Backlog is null

db1 ping [last change: 2015/04/14 00:10:57] OK

db1 mysql [last change: 2015/04/14 00:10:57] OK

db1 rep_threads [last change: 2015/04/14 00:10:57] OK

db1 rep_backlog [last change: 2015/04/14 00:10:57] OK: Backlog is null

[root@oraclem1 mysql-mmm]#

8.3 View the monitor log

[root@oraclem1 mysql-mmm]# tail -f /var/log/mysql-mmm/mmm_mond.log

2015/04/14 00:55:29 FATAL Admin changed state of 'db1' from AWAITING_RECOVERY to ONLINE

2015/04/14 00:55:29 INFO Orphaned role 'writer(192.168.52.120)' has been assigned to 'db1'

2015/04/14 00:55:29 INFO Orphaned role 'reader(192.168.52.131)' has been assigned to 'db1'

2015/04/14 00:55:29 INFO Orphaned role 'reader(192.168.52.129)' has been assigned to 'db1'

2015/04/14 00:55:29 INFO Orphaned role 'reader(192.168.52.128)' has been assigned to 'db1'

2015/04/14 00:58:15 FATAL Admin changed state of 'db2' from AWAITING_RECOVERY to ONLINE

2015/04/14 00:58:15 INFO Moving role 'reader(192.168.52.131)' from host 'db1' to host 'db2'

2015/04/14 00:58:15 INFO Moving role 'reader(192.168.52.129)' from host 'db1' to host 'db2'

2015/04/14 00:58:18 FATAL Admin changed state of 'db3' from AWAITING_RECOVERY to ONLINE

2015/04/14 00:58:18 INFO Moving role 'reader(192.168.52.131)' from host 'db2' to host 'db3'

8.4 mmm_control commands

[root@oraclem1 ~]# mmm_control --help

Invalid command '--help'

Valid commands are:

help - show this message

ping - ping monitor

show - show status

checks [<host>|all [<check>|all]] - show checks status

set_online <host> - set host <host> online

set_offline <host> - set host <host> offline

mode - print current mode.

set_active - switch into active mode.

set_manual - switch into manual mode.

set_passive - switch into passive mode.

move_role [--force] <role> <host> - move exclusive role <role> to host <host>

(Only use --force if you know what you are doing!)

set_ip <ip> <host> - set role with ip <ip> to host <host>

[root@oraclem1 ~]#

9, Test failover

9.1 How MMM switching works

How a slave agent switches masters after it receives the set_active_master message for the new master:

Source: Agent\Helpers\Actions.pm

set_active_master($new_master)

Try to catch up with the old master as far as possible and change the master to the new host.

(Syncs to the master log if the old master is reachable. Otherwise syncs to the relay log.)

(1) Get the binlog file and position to sync to

First take Master_Log_File and Read_Master_Log_Pos from SHOW SLAVE STATUS on the new master:

wait_log=Master_Log_File, wait_pos=Read_Master_Log_Pos

If the old master is reachable, then take File and Position from SHOW MASTER STATUS on the old master:

wait_log=File, wait_pos=Position # overrides the values from the new master's slave status; the old master is authoritative

(2) The slave catches up to the old master's position

SELECT MASTER_POS_WAIT('wait_log', wait_pos);

# Stop replication

STOP SLAVE;

(3) Point the slave at the new master

# At this point the new master is already serving writes.

# (In the main thread the new master receives the activation message first; once the new master has finished switching, including the VIP operations, _distribute_roles propagates the master change to the slaves.)

# Running FLUSH LOGS on the new master after the switch makes management easier.

# Get the new master's binlog coordinates.

SHOW MASTER STATUS;

# Read the replication account and password from /etc/mysql-mmm/mmm_common.conf

replication_user, replication_password

# Set the new replication position

CHANGE MASTER TO MASTER_HOST='$new_peer_host', MASTER_PORT=$new_peer_port,

MASTER_USER='$repl_user', MASTER_PASSWORD='$repl_password',

MASTER_LOG_FILE='$master_log', MASTER_LOG_POS=$master_pos;

# Start replication

START SLAVE;
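Steps (1) and (2) above boil down to a small decision: use the old master's own coordinates when it is reachable, otherwise fall back to what the new master has read so far. The Python sketch below restates that decision; it is a paraphrase of the steps in this section, not a port of the Perl code in Actions.pm, and the function names and sample values are hypothetical.

```python
def choose_wait_position(new_master_slave_status, old_master_status=None):
    """Pick the binlog file/position a slave should wait for before switching.

    new_master_slave_status: fields from SHOW SLAVE STATUS on the new master.
    old_master_status: fields from SHOW MASTER STATUS on the old master,
                       or None when the old master cannot be reached.
    """
    wait_log = new_master_slave_status["Master_Log_File"]
    wait_pos = new_master_slave_status["Read_Master_Log_Pos"]
    if old_master_status is not None:
        # The old master is reachable, so its own coordinates take precedence.
        wait_log = old_master_status["File"]
        wait_pos = old_master_status["Position"]
    return wait_log, wait_pos


def catch_up_sql(wait_log, wait_pos):
    """Build the statement the slave runs to catch up to that position."""
    return f"SELECT MASTER_POS_WAIT('{wait_log}', {int(wait_pos)});"


# Illustrative values only.
new_master = {"Master_Log_File": "mysql-bin.000208", "Read_Master_Log_Pos": 1200}
old_master = {"File": "mysql-bin.000208", "Position": 1248}

print(catch_up_sql(*choose_wait_position(new_master, old_master)))
print(catch_up_sql(*choose_wait_position(new_master)))
```

When the old master is down, the slave can only catch up to the position the new master had already received, which is one reason MMM cannot fully guarantee consistency.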

9.2 Stop db1 and check whether the writer role moves to db2 automatically

(1) Run service mysql stop on db1:

[root@data01 ~]# service mysql stop;

Shutting down MySQL..... SUCCESS!

[root@data01 ~]#

(2) Check the status with mmm_control on the monitor

[root@oraclem1 ~]# mmm_control show

db1(192.168.52.129) master/HARD_OFFLINE. Roles:

db2(192.168.52.128) master/ONLINE. Roles: reader(192.168.52.129),writer(192.168.52.120)

db3(192.168.52.131) slave/ONLINE. Roles: reader(192.168.52.128),reader(192.168.52.131)

[root@oraclem1 ~]#

The writer role has moved to db2.
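Reading the mmm_control show output by eye works for three hosts; for scripted checks the same lines can be parsed. This is a minimal Python sketch that assumes the line format shown in the transcripts in this document; `parse_show` and `writer_host` are hypothetical helper names.

```python
import re

# host(ip) mode/STATE. Roles: role(ip), role(ip), ...
LINE_RE = re.compile(r"^\s*(\w+)\(([\d.]+)\)\s*(\w+)/(\w+)\.\s*Roles:\s*(.*)$")
ROLE_RE = re.compile(r"(\w+)\(([\d.]+)\)")


def parse_show(output):
    """Parse `mmm_control show` output into {host: {ip, mode, state, roles}}."""
    hosts = {}
    for line in output.splitlines():
        match = LINE_RE.match(line)
        if not match:
            continue
        name, ip, mode, state, roles = match.groups()
        hosts[name] = {
            "ip": ip,
            "mode": mode,
            "state": state,
            "roles": ROLE_RE.findall(roles),  # list of (role, vip) pairs
        }
    return hosts


def writer_host(hosts):
    """Return the name of the host holding the writer role, or None."""
    for name, info in hosts.items():
        if any(role == "writer" for role, _ in info["roles"]):
            return name
    return None


sample = """
  db1(192.168.52.129) master/HARD_OFFLINE. Roles:
  db2(192.168.52.128) master/ONLINE. Roles: reader(192.168.52.129),writer(192.168.52.120)
  db3(192.168.52.131) slave/ONLINE. Roles: reader(192.168.52.128),reader(192.168.52.131)
"""

hosts = parse_show(sample)
print(writer_host(hosts))      # db2
print(hosts["db1"]["state"])   # HARD_OFFLINE
```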

(3) On the monitor, the log shows the following:

[root@oraclem1 mysql-mmm]# tail -f /var/log/mysql-mmm/mmm_mond.log

......

2015/04/14 01:34:11 WARN Check 'rep_backlog' on 'db1' is in unknown state! Message: UNKNOWN: Connect error (host = 192.168.52.129:3306, user = mmm_monitor)! Lost connection to MySQL server at 'reading initial communication packet', system error: 111

2015/04/14 01:34:11 WARN Check 'rep_threads' on 'db1' is in unknown state! Message: UNKNOWN: Connect error (host = 192.168.52.129:3306, user = mmm_monitor)! Lost connection to MySQL server at 'reading initial communication packet', system error: 111

2015/04/14 01:34:21 ERROR Check 'mysql' on 'db1' has failed for 10 seconds! Message: ERROR: Connect error (host = 192.168.52.129:3306, user = mmm_monitor)! Lost connection to MySQL server at 'reading initial communication packet', system error: 111

2015/04/14 01:34:23 FATAL State of host 'db1' changed from ONLINE to HARD_OFFLINE (ping: OK, mysql: not OK)

2015/04/14 01:34:23 INFO Removing all roles from host 'db1':

2015/04/14 01:34:23 INFO Removed role 'reader(192.168.52.128)' from host 'db1'

2015/04/14 01:34:23 INFO Removed role 'writer(192.168.52.120)' from host 'db1'

2015/04/14 01:34:23 INFO Orphaned role 'writer(192.168.52.120)' has been assigned to 'db2'

2015/04/14 01:34:23 INFO Orphaned role 'reader(192.168.52.128)' has been assigned to 'db3'

9.3 Stop db2 and check that the writer role moves back to db1 automatically

(1) Start db1 and set it online

[root@data01 ~]# service mysql start

Starting MySQL.................. SUCCESS!

[root@data01 ~]#

Set db1 online on the monitor:

[root@oraclem1 ~]# mmm_control set_online db1;

OK: State of 'db1' changed to ONLINE. Now you can wait some time and check its new roles!

[root@oraclem1 ~]#

Check the status on the monitor:

[root@oraclem1 ~]# mmm_control show

db1(192.168.52.129) master/ONLINE. Roles: reader(192.168.52.131)

db2(192.168.52.128) master/ONLINE. Roles: reader(192.168.52.129),writer(192.168.52.120)

db3(192.168.52.131) slave/ONLINE. Roles: reader(192.168.52.128)

[root@oraclem1 ~]#

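db1 has to be started and set online first because, in this MMM configuration, the writer role can only move between db1 and db2. For an automatic switch to succeed, the target master must be ONLINE; otherwise the switch fails because there is no online host to take the role.

(2) Stop db2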

[root@data02 ~]# service mysql stop

Shutting down MySQL.. SUCCESS!

[root@data02 ~]#

(3) On the monitor, check whether the master has switched from db2 to db1 automatically

[root@oraclem1 ~]# mmm_control show

db1(192.168.52.129) master/ONLINE. Roles: reader(192.168.52.131),writer(192.168.52.120)

db2(192.168.52.128) master/HARD_OFFLINE. Roles:

db3(192.168.52.131) slave/ONLINE. Roles: reader(192.168.52.129), reader(192.168.52.128)

[root@oraclem1 ~]#

The writer role has moved to db1 automatically and db2 is in the HARD_OFFLINE state, so the automatic switch succeeded.

(4) Check the monitor log

[root@oraclem1 mysql-mmm]# tail -f /var/log/mysql-mmm/mmm_mond.log

......

2015/04/14 01:56:13 ERROR Check 'mysql' on 'db2' has failed for 10 seconds! Message: ERROR: Connect error (host = 192.168.52.128:3306, user = mmm_monitor)! Lost connection to MySQL server at 'reading initial communication packet', system error: 111

2015/04/14 01:56:14 FATAL State of host 'db2' changed from ONLINE to HARD_OFFLINE (ping: OK, mysql: not OK)

2015/04/14 01:56:14 INFO Removing all roles from host 'db2':

2015/04/14 01:56:14 INFO Removed role 'reader(192.168.52.129)' from host 'db2'

2015/04/14 01:56:14 INFO Removed role 'writer(192.168.52.120)' from host 'db2'

2015/04/14 01:56:14 INFO Orphaned role 'writer(192.168.52.120)' has been assigned to 'db1'

2015/04/14 01:56:14 INFO Orphaned role 'reader(192.168.52.129)' has been assigned to 'db3'

9.4 Verify the VIP

First find the writer VIP; it is currently on db2:

[root@oraclem1 ~]# mmm_control show

db1(192.168.52.129) master/ONLINE. Roles: reader(192.168.52.230)

db2(192.168.52.130) master/ONLINE. Roles: reader(192.168.52.231),writer(192.168.52.120)

db3(192.168.52.131) slave/ONLINE. Roles: reader(192.168.52.229)

[root@oraclem1 ~]#

Then check the IP bindings on db2:

[root@data02 mysql-mmm-2.2.1]# ip add

1: lo:

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0:

link/ether 00:0c:29:a7:26:fc brd ff:ff:ff:ff:ff:ff

inet 192.168.52.130/24 brd 192.168.52.255 scope global eth0

inet 192.168.52.231/32 scope global eth0

inet 192.168.52.120/32 scope global eth0

inet6 fe80::20c:29ff:fea7:26fc/64 scope link

valid_lft forever preferred_lft forever

3: pan0:

link/ether 46:27:c6:ef:2b:95 brd ff:ff:ff:ff:ff:ff

[root@data02 mysql-mmm-2.2.1]#

Ping the writer VIP; it responds:

[root@oraclem1 ~]# ping 192.168.52.120

PING 192.168.52.120 (192.168.52.120) 56(84) bytes of data.

64 bytes from 192.168.52.120: icmp_seq=1 ttl=64 time=2.37 ms

64 bytes from 192.168.52.120: icmp_seq=2 ttl=64 time=0.288 ms

64 bytes from 192.168.52.120: icmp_seq=3 ttl=64 time=0.380 ms

^C

--- 192.168.52.120 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2717ms

rtt min/avg/max/mdev = 0.288/1.015/2.377/0.963 ms

[root@oraclem1 ~]#

9.5 Extending MMM

10, Summary of errors encountered

10.1 Error 1:

[root@data01 mysql-mmm-2.2.1]# /etc/init.d/mysql-mmm-agent start

Daemon bin: '/usr/sbin/mmm_agentd'

Daemon pid: '/var/run/mmm_agentd.pid'

Starting MMM Agent daemon... Can't locate Proc/Daemon.pm in @INC (@INC contains: /usr/local/lib64/perl5 /usr/local/share/perl5 /usr/lib64/perl5/vendor_perl /usr/share/perl5/vendor_perl /usr/lib64/perl5 /usr/share/perl5 .) at /usr/sbin/mmm_agentd line 7.

BEGIN failed--compilation aborted at /usr/sbin/mmm_agentd line 7.

failed

[root@data01 mysql-mmm-2.2.1]#

Fix: install two Perl modules with cpan:

cpan Proc::Daemon

cpan Log::Log4perl

10.2 Error 2:

[root@oraclem1 vendor_perl]# /etc/init.d/mysql-mmm-agent start

Daemon bin: '/usr/sbin/mmm_agentd'

Daemon pid: '/var/run/mmm_agentd.pid'

Starting MMM Agent daemon... Can't locate Proc/Daemon.pm in @INC (@INC contains: /usr/local/lib64/perl5 /usr/local/share/perl5 /usr/lib64/perl5/vendor_perl /usr/share/perl5/vendor_perl /usr/lib64/perl5 /usr/share/perl5 .) at /usr/sbin/mmm_agentd line 7.

BEGIN failed--compilation aborted at /usr/sbin/mmm_agentd line 7.

failed

[root@oraclem1 vendor_perl]#

Installing Proc::Daemon with cpan then failed one of its tests:

# Failed test 'the 'pid2.file' has right permissions via file_umask'

# at /root/.cpan/build/Proc-Daemon-0.19-zoMArm/t/02_testmodule.t line 152.

/root/.cpan/build/Proc-Daemon-0.19-zoMArm/t/02_testmodule.t did not return a true value at t/03_taintmode.t line 20.

# Looks like you failed 1 test of 19.

# Looks like your test exited with 2 just after 19.

t/03_taintmode.t ... Dubious, test returned 2 (wstat 512, 0x200)

Failed 1/19 subtests

Fix: force the installation with -f. The flag has to come before the module name (cpan -f Proc::Daemon); in the session below it was placed after the module name, so cpan treated -f as a module name and printed a warning.

[root@oraclem1 ~]# cpan Proc::Daemon -f

CPAN: Storable loaded ok (v2.20)

Going to read '/root/.cpan/Metadata'

Database was generated on Mon, 13 Apr 2015 11:29:02 GMT

Proc::Daemon is up to date (0.19).

Warning: Cannot install -f, don't know what it is.

Try the command

i/-f/

to find objects with matching identifiers.

CPAN: Time::HiRes loaded ok (v1.9726)

[root@oraclem1 ~]#

10.3 Error 3:

[root@oraclem1 mysql-mmm]# ping 192.168.52.120

PING 192.168.52.120 (192.168.52.120) 56(84) bytes of data.

From 192.168.52.131 icmp_seq=2 Destination Host Unreachable

From 192.168.52.131 icmp_seq=3 Destination Host Unreachable

From 192.168.52.131 icmp_seq=4 Destination Host Unreachable

From 192.168.52.131 icmp_seq=6 Destination Host Unreachable

From 192.168.52.131 icmp_seq=7 Destination Host Unreachable

From 192.168.52.131 icmp_seq=8 Destination Host Unreachable

From 192.168.52.131 icmp_seq=10 Destination Host Unreachable

From 192.168.52.131 icmp_seq=11 Destination Host Unreachable

From 192.168.52.131 icmp_seq=12 Destination Host Unreachable

From 192.168.52.131 icmp_seq=14 Destination Host Unreachable

From 192.168.52.131 icmp_seq=15 Destination Host Unreachable

From 192.168.52.131 icmp_seq=16 Destination Host Unreachable

From 192.168.52.131 icmp_seq=17 Destination Host Unreachable

From 192.168.52.131 icmp_seq=18 Destination Host Unreachable

From 192.168.52.131 icmp_seq=19 Destination Host Unreachable

^C

--- 192.168.52.120 ping statistics ---

20 packets transmitted, 0 received, +15 errors, 100% packet loss, time 19729ms

pipe 4

[root@oraclem1 mysql-mmm]#

The agent log (agent.log) on db1 shows the following errors:

2015/04/20 02:29:41 FATAL Couldn't configure IP '192.168.52.131' on interface 'eth0': undef

2015/04/20 02:29:42 FATAL Couldn't allow writes: undef

2015/04/20 02:29:44 FATAL Couldn't configure IP '192.168.52.131' on interface 'eth0': undef

2015/04/20 02:29:45 FATAL Couldn't allow writes: undef

Next, check the network interfaces:

[root@oraclem1 ~]# ip add

1: lo:

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0:

link/ether 00:0c:29:0a:79:e6 brd ff:ff:ff:ff:ff:ff

inet 192.168.52.131/24 brd 192.168.52.255 scope global eth0

inet6 fe80::20c:29ff:fe0a:79e6/64 scope link

valid_lft forever preferred_lft forever

3: pan0:

link/ether 5e:eb:c1:a4:f8:f8 brd ff:ff:ff:ff:ff:ff

[root@oraclem1 ~]#

Then run configure_ip by hand to test the eth0 interface:

[root@oraclem1 ~]# /usr/lib/mysql-mmm/agent/configure_ip eth0 192.168.52.120

Can't locate Net/ARP.pm in @INC (@INC contains: /usr/local/lib64/perl5 /usr/local/share/perl5 /usr/lib64/perl5/vendor_perl /usr/share/perl5/vendor_perl /usr/lib64/perl5 /usr/share/perl5 .) at /usr/share/perl5/vendor_perl/MMM/Agent/Helpers/Network.pm line 11.

BEGIN failed--compilation aborted at /usr/share/perl5/vendor_perl/MMM/Agent/Helpers/Network.pm line 11.

Compilation failed in require at /usr/share/perl5/vendor_perl/MMM/Agent/Helpers/Actions.pm line 5.

BEGIN failed--compilation aborted at /usr/share/perl5/vendor_perl/MMM/Agent/Helpers/Actions.pm line 5.

Compilation failed in require at /usr/lib/mysql-mmm/agent/configure_ip line 6.

BEGIN failed--compilation aborted at /usr/lib/mysql-mmm/agent/configure_ip line 6.

[root@oraclem1 ~]#

After installing Net::ARP, everything worked: cpan Net::ARP
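All three errors above come down to missing Perl modules, so it is worth checking for them before starting the agents. The Python sketch below runs `perl -MModule -e 1`, which exits non-zero when a module cannot be loaded. The helper names and the injectable `run` parameter are choices made for this example; the module list is the set of modules named in this section.

```python
import subprocess


def module_installed(module, run=subprocess.run):
    """Return True if `perl -M<module> -e 1` succeeds on this machine."""
    try:
        result = run(
            ["perl", f"-M{module}", "-e", "1"],
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
        )
    except FileNotFoundError:
        # perl itself is not installed.
        return False
    return result.returncode == 0


def missing_modules(modules, run=subprocess.run):
    """Return the subset of `modules` that cannot be loaded."""
    return [m for m in modules if not module_installed(m, run)]


# Modules whose absence caused the errors recorded above.
REQUIRED = ["Proc::Daemon", "Log::Log4perl", "Net::ARP"]

if __name__ == "__main__":
    for name in missing_modules(REQUIRED):
        print(f"missing Perl module: {name} (install with: cpan {name})")
```

The `run` parameter exists only so the check can be exercised without a Perl installation, for example by passing a stub in a unit test.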

Reference: http://blog.csdn.net/mchdba/article/details/8633840

Reference: http://mysql-mmm.org/downloads

Reference: http://www.open-open.com/lib/view/open1395887766467.html

MySQL High Availability with MMM: Dual-Master Replication

I. Introduction to MMM

1. Overview

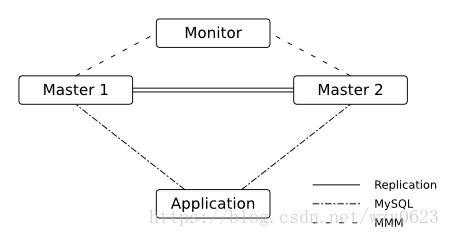

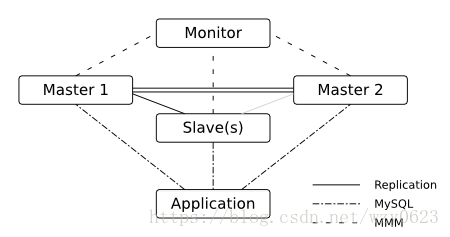

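MMM (Master-Master replication manager for MySQL) is a set of scripts that supports dual-master failover and day-to-day management of a dual-master setup. MMM is written in Perl and is mainly used to monitor and manage MySQL Master-Master replication; it can be described as a manager for MySQL master-master replication. Although it is called dual-master replication, the application may write to only one master at any given time; the standby master serves part of the read traffic, which keeps it warm and speeds up a master switch. In short, MMM provides failover on the one hand, and on the other its bundled tool scripts can load-balance reads across multiple slaves. MMM is a scalable suite of scripts for monitoring, failover, and management of MySQL master-master replication configurations (only one node is writable at any time). The suite can also load-balance reads across any number of slaves in a standard master-slave setup, so it can be used to bring virtual IPs up and down on a group of replicating servers. It also ships scripts for data backups and for resynchronizing nodes.

MMM can remove the virtual IP from a server with high replication lag, either automatically or manually. It can also back up data and resynchronize data between two nodes. Because MMM cannot fully guarantee data consistency, it suits workloads that tolerate some inconsistency but want the highest possible availability. MySQL itself does not provide a replication failover solution; MMM adds server failover and thereby makes MySQL highly available. For workloads with strict consistency requirements, MMM is not recommended as a high-availability architecture.

2. Advantages and disadvantages

Advantages: high availability, good scalability, and automatic failover. With master-master replication only one database accepts writes at any time, which helps keep the data consistent.

Disadvantages: the Monitor node is a single point of failure; it can be combined with Keepalived to make it highly available.

3. How it works

MMM is a flexible set of Perl scripts that monitors MySQL replication, performs failover, and manages the configuration of MySQL Master-Master replication (only one node is writable at a time). Its main functionality is provided by three scripts:

mmm_mond: the monitoring daemon. It does all the monitoring work and makes all decisions about moving roles and so on. It runs on the monitoring host.

mmm_agentd: the agent daemon that runs on every MySQL server (masters and slaves). It carries out the monitoring probes and performs simple remote service changes. It runs on the monitored hosts.

mmm_control: a simple script that provides commands for managing the mmm_mond process.

The mysql-mmm monitor manages several virtual IPs (VIPs): one writer VIP and several reader VIPs. Under the monitor's control these IPs are bound to available MySQL servers, and when a server goes down the monitor moves its VIPs to other servers. For this to work, MySQL needs accounts that let the monitoring host and agents maintain the servers: an mmm_monitor user and an mmm_agent user, plus an mmm_tools user if you want to use MMM's backup tools.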

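4. Typical use cases

(1) Two-node setup

The two-node architecture is shown in Figure 1.

Figure 1

In a two-node master-master setup, MMM uses five IPs: one permanent IP for each node, two reader VIPs (read-only), and one writer VIP (updates). The last three IPs migrate between the nodes depending on node availability. Normally, when there is no replication delay, the active master holds two VIPs (one reader and the writer) and the standby master holds one reader VIP (read-only). If a node fails, both the reader and writer roles migrate to the working node.

(2) Two masters plus one or more slaves

This architecture is shown in Figure 2.

Figure 2

5. System requirements

An MMM setup with n MySQL servers requires:

n + 1 hosts: one host per MySQL server, plus one host for the MMM monitor.

2 * n + 1 IP addresses: one fixed IP and one reader VIP per MySQL host, plus one writer VIP.

monitor user: a MySQL user with the REPLICATION CLIENT privilege, for the MMM monitor.

agent user: a MySQL user with the SUPER, REPLICATION CLIENT, and PROCESS privileges, for the MMM agents.

replication user: a MySQL user with the REPLICATION SLAVE privilege, used for replication.

tools user: a MySQL user with the SUPER, REPLICATION CLIENT, and RELOAD privileges, for the MMM tools.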
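The host and IP counts follow directly from the two formulas above. A tiny Python sketch, using a hypothetical helper name `mmm_requirements`, makes the arithmetic explicit:

```python
def mmm_requirements(n_mysql_servers):
    """Hosts and IP addresses needed for an MMM setup with n MySQL servers."""
    n = n_mysql_servers
    return {
        "hosts": n + 1,               # n MySQL servers + 1 monitor
        "fixed_ips": n,               # one permanent IP per MySQL host
        "reader_vips": n,             # one reader VIP per MySQL host
        "writer_vips": 1,             # a single writer VIP
        "ip_addresses": 2 * n + 1,    # total of the three lines above
    }


print(mmm_requirements(2))
# {'hosts': 3, 'fixed_ips': 2, 'reader_vips': 2, 'writer_vips': 1, 'ip_addresses': 5}
```

For n = 2 this gives the five IPs described in the two-node setup: two permanent IPs, two reader VIPs, and one writer VIP.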

The monitoring host needs the following packages:

(1) perl

(2) fping (if you want to run mmm_mond as a non-root user)

(3) Perl modules:

Algorithm::Diff

Class::Singleton

DBI and DBD::mysql

File::Basename

File::stat

File::Temp

Log::Dispatch

Log::Log4perl

Mail::Send

Net::Ping

Proc::Daemon

Thread::Queue

Time::HiRes

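On the node hosts, read_only=1 should initially be set in the configuration of every MySQL server; MMM switches it to read_only=0 on the host that holds the active_master_role. The node hosts need the following packages:

(1) perl

(2) iproute

(3) send_arp (Solaris)

(4) Perl modules: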

Algorithm::Diff

DBI and DBD::mysql

File::Basename

File::stat

Log::Dispatch

Log::Log4perl

Mail::Send

Net::ARP (linux)

Proc::Daemon

Time::HiRes

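If you want to use the MMM tools (mmm_backup, mmm_restore, mmm_clone), the partition holding the MySQL databases and logs must be on LVM. Note that the volume group needs free physical extents for the snapshot's undo space; see "Estimating Undo Space needed for LVM Snapshot". The MMM tools also require the following Perl modules: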

Path::Class

Data::Dumper

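II. Experiment design

1. Environment

Operating system: CentOS Linux release 7.2.1511 (Core)

MySQL version: 5.6.14

2. Architecture

The experiment architecture is shown in Figure 3.

Figure 3

III. Installing and configuring MMM

1. Configure dual-master replication

For detailed steps on configuring dual-master replication, see http://www.cnblogs.com/phpstudy2015-6/p/6485819.html#_label7; they are omitted here.

2. Install MMM

Run the following yum command on all three hosts to install the MMM packages.

yum -y install mysql-mmm-*

3. Create the database users

Create the mmm_agent and mmm_monitor users on DB1 and DB2.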

grant super,replication client,process on *.* to 'mmm_agent'@'%' identified by '123456';

grant replication client on *.* to 'mmm_monitor'@'%' identified by '123456';

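4. Configure MMM

(1) Common configuration

Edit /etc/mysql-mmm/mmm_common.conf on DB1 so that it reads:

active_master_role writer

<host default>

cluster_interface ens32

pid_path /var/run/mmm_agentd.pid

bin_path /usr/libexec/mysql-mmm/

replication_user repl

replication_password 123456

agent_user mmm_agent

agent_password 123456

</host>

<host db1>

ip 172.16.1.125

mode master

peer db2

</host>

<host db2>

ip 172.16.1.126

mode master

peer db1

</host>

<role writer>

hosts db1, db2

ips 172.16.1.100

mode exclusive

</role>

<role reader>

hosts db1, db2

ips 172.16.1.210, 172.16.1.211

mode balanced

</role>

Main configuration items:

active_master_role: name of the active master role; used by both the agent and the monitor.

replication_user: the user used for replication.

agent_user: the mmm-agent user.

mode in a host section: marks the host as a master (active or standby) or a slave.

mode in a role section: exclusive means only one host can hold the role at a time. A balanced role may have several ips, which are balanced across the hosts.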
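The difference between the two role modes can be sketched in a few lines of Python. This is a deliberately simplified model (round-robin for balanced roles, "keep the current holder if it is still online" for exclusive roles) written for illustration; it is not MMM's actual assignment algorithm, and both function names are hypothetical.

```python
def assign_exclusive(candidates, online, current=None):
    """Exclusive role: exactly one holder.

    Keep the current holder while it is online; otherwise take the first
    online candidate. Returns None when no candidate is online.
    """
    if current in candidates and current in online:
        return current
    for host in candidates:
        if host in online:
            return host
    return None


def assign_balanced(ips, candidates, online):
    """Balanced role: spread the VIPs round-robin over the online candidates."""
    hosts = [h for h in candidates if h in online]
    assignment = {h: [] for h in hosts}
    for i, ip in enumerate(ips):
        if hosts:
            assignment[hosts[i % len(hosts)]].append(ip)
    return assignment


reader_ips = ["172.16.1.210", "172.16.1.211"]
masters = ["db1", "db2"]

# Both masters online: one reader VIP each.
print(assign_balanced(reader_ips, masters, online={"db1", "db2"}))
# db1 down: db2 takes both reader VIPs and the writer role.
print(assign_balanced(reader_ips, masters, online={"db2"}))
print(assign_exclusive(masters, online={"db2"}, current="db1"))
```

In this model, once a failed master comes back the writer role stays with its current holder rather than moving back.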

Copy the file to all other nodes (DB2 and the Monitor).

scp /etc/mysql-mmm/mmm_common.conf 172.16.1.126:/etc/mysql-mmm/

scp /etc/mysql-mmm/mmm_common.conf 172.16.1.127:/etc/mysql-mmm/

(2) Agent configuration

Contents of /etc/mysql-mmm/mmm_agent.conf on DB1:

include mmm_common.conf

this db1

Contents of /etc/mysql-mmm/mmm_agent.conf on DB2:

include mmm_common.conf

this db2

(3) Monitor configuration

Contents of /etc/mysql-mmm/mmm_mon.conf on the Monitor:

include mmm_common.conf

<monitor>

ip 172.16.1.127

pid_path /var/run/mmm_mond.pid

bin_path /usr/libexec/mysql-mmm

status_path /var/lib/mysql-mmm/mmm_mond.status

ping_ips 172.16.1.125,172.16.1.126

auto_set_online 60

</monitor>

<host default>

monitor_user mmm_monitor

monitor_password 123456

</host>

debug 0

auto_set_online is the number of seconds a node must spend in AWAITING_RECOVERY before it is switched to ONLINE automatically; 0 disables the feature.
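The rule described for auto_set_online can be written as a one-line predicate. The Python sketch below models only what the sentence above states; it is not taken from the mmm_mond source, and `should_set_online` is a hypothetical name.

```python
def should_set_online(state, seconds_in_state, auto_set_online):
    """Decide whether a host should be moved to ONLINE automatically.

    state: current host state, e.g. "AWAITING_RECOVERY".
    seconds_in_state: how long the host has been in that state.
    auto_set_online: configured threshold in seconds; 0 disables the feature.
    """
    if auto_set_online <= 0:
        return False
    return state == "AWAITING_RECOVERY" and seconds_in_state >= auto_set_online


print(should_set_online("AWAITING_RECOVERY", 61, 60))   # True
print(should_set_online("AWAITING_RECOVERY", 30, 60))   # False
print(should_set_online("AWAITING_RECOVERY", 999, 0))   # False
```

With auto_set_online 60, a recovered node returns to ONLINE about a minute after it comes back, without a manual mmm_control set_online.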

IV. Functional tests

1. Start MMM

(1) Start the agent on DB1 and DB2

/etc/init.d/mysql-mmm-agent start

/etc/init.d/mysql-mmm-agent start

The contents of /etc/init.d/mysql-mmm-agent are as follows:

#!/bin/sh

#

# mysql-mmm-agent This shell script takes care of starting and stopping

# the mmm agent daemon.

#

# chkconfig: - 64 36

# description: MMM Agent.

# processname: mmm_agentd

# config: /etc/mmm_agent.conf

# pidfile: /var/run/mmm_agentd.pid

# Cluster name (it can be empty for default cases)

CLUSTER=''

#-----------------------------------------------------------------------

# Paths

if [ "$CLUSTER" != "" ]; then

MMM_AGENTD_BIN="/usr/sbin/mmm_agentd @$CLUSTER"

MMM_AGENTD_PIDFILE="/var/run/mmm_agentd-$CLUSTER.pid"

else

MMM_AGENTD_BIN="/usr/sbin/mmm_agentd"

MMM_AGENTD_PIDFILE="/var/run/mmm_agentd.pid"

fi

echo "Daemon bin: '$MMM_AGENTD_BIN'"

echo "Daemon pid: '$MMM_AGENTD_PIDFILE'"

#-----------------------------------------------------------------------

# See how we were called.

case "$1" in

start)

# Start daemon.

echo -n "Starting MMM Agent daemon... "

if [ -s $MMM_AGENTD_PIDFILE ] && kill -0 `cat $MMM_AGENTD_PIDFILE` 2> /dev/null; then

echo " already running."

exit 0

fi

$MMM_AGENTD_BIN

if [ "$?" -ne 0 ]; then

echo "failed"

exit 1

fi

echo "Ok"

exit 0

;;

stop)

# Stop daemon.

echo -n "Shutting down MMM Agent daemon"

if [ -s $MMM_AGENTD_PIDFILE ]; then

pid="$(cat $MMM_AGENTD_PIDFILE)"

cnt=0

kill "$pid"

while kill -0 "$pid" 2>/dev/null; do

cnt=`expr "$cnt" + 1`

if [ "$cnt" -gt 15 ]; then

kill -9 "$pid"

break

fi

sleep 2

echo -n "."

done

echo " Ok"

exit 0

fi

echo " not running."

exit 0

;;

status)

echo -n "Checking MMM Agent process:"

if [ ! -s $MMM_AGENTD_PIDFILE ]; then

echo " not running."

exit 3

fi

pid="$(cat $MMM_AGENTD_PIDFILE)"

if ! kill -0 "$pid" 2> /dev/null; then

echo " not running."

exit 1

fi

echo " running."

exit 0

;;

restart|reload)

$0 stop

$0 start

exit $?

;;

*)

echo "Usage: $0 {start|stop|restart|status}"

;;

esac

exit 1

(2) Start the monitor on the Monitor host

/etc/init.d/mysql-mmm-monitor start

The contents of /etc/init.d/mysql-mmm-monitor are as follows:

#!/bin/sh

#

# mysql-mmm-monitor This shell script takes care of starting and stopping

# the mmm monitoring daemon.

#

# chkconfig: - 64 36

# description: MMM Monitor.

# processname: mmm_mond

# config: /etc/mmm_mon.conf

# pidfile: /var/run/mmm_mond.pid

# Cluster name (it can be empty for default cases)

CLUSTER=''

#-----------------------------------------------------------------------

# Paths

if [ "$CLUSTER" != "" ]; then

MMM_MOND_BIN="/usr/sbin/mmm_mond @$CLUSTER"

MMM_MOND_PIDFILE="/var/run/mmm_mond-$CLUSTER.pid"

else

MMM_MOND_BIN="/usr/sbin/mmm_mond"

MMM_MOND_PIDFILE="/var/run/mmm_mond.pid"

fi

echo "Daemon bin: '$MMM_MOND_BIN'"

echo "Daemon pid: '$MMM_MOND_PIDFILE'"

#-----------------------------------------------------------------------

# See how we were called.

case "$1" in

start)

# Start daemon.

echo -n "Starting MMM Monitor daemon: "

if [ -s $MMM_MOND_PIDFILE ] && kill -0 `cat $MMM_MOND_PIDFILE` 2> /dev/null; then

echo " already running."

exit 0

fi

$MMM_MOND_BIN

if [ "$?" -ne 0 ]; then

echo "failed"

exit 1

fi

echo "Ok"

exit 0

;;

stop)

# Stop daemon.

echo -n "Shutting down MMM Monitor daemon: "

if [ -s $MMM_MOND_PIDFILE ]; then

pid="$(cat $MMM_MOND_PIDFILE)"

cnt=0

kill "$pid"

while kill -0 "$pid" 2>/dev/null; do

cnt=`expr "$cnt" + 1`

if [ "$cnt" -gt 15 ]; then

kill -9 "$pid"

break

fi

sleep 2

echo -n "."

done

echo " Ok"

exit 0

fi

echo " not running."

exit 0

;;

status)

echo -n "Checking MMM Monitor process:"

if [ ! -s $MMM_MOND_PIDFILE ]; then

echo " not running."

exit 3

fi

pid="$(cat $MMM_MOND_PIDFILE)"

if ! kill -0 "$pid" 2> /dev/null; then

echo " not running."

exit 1

fi

echo " running."

exit 0

;;

restart|reload)

$0 stop

$0 start

exit $?

;;

*)

echo "Usage: $0 {start|stop|restart|status}"

;;

esac

exit 1

(3) Check the status after MMM has started

Once MMM has started successfully, mmm_control show and mmm_control checks on the Monitor give the following results.

[root@hdp4 ~]# mmm_control show

db1(172.16.1.125) master/ONLINE. Roles: reader(172.16.1.210)

db2(172.16.1.126) master/ONLINE. Roles: reader(172.16.1.211), writer(172.16.1.100)

[root@hdp4 ~]#

[root@hdp4 ~]# mmm_control checks

db2 ping [last change: 2018/08/02 08:57:38] OK

db2 mysql [last change: 2018/08/02 08:57:38] OK

db2 rep_threads [last change: 2018/08/02 08:57:38] OK

db2 rep_backlog [last change: 2018/08/02 08:57:38] OK: Backlog is null

db1 ping [last change: 2018/08/02 08:57:38] OK

db1 mysql [last change: 2018/08/02 08:57:38] OK

db1 rep_threads [last change: 2018/08/02 08:57:38] OK

db1 rep_backlog [last change: 2018/08/02 08:57:38] OK: Backlog is null
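
With more hosts, scanning this table by eye becomes tedious. The sketch below filters the mmm_control checks output down to the lines whose result does not start with OK. It assumes every check line has the layout shown above (host, check name, bracketed timestamp, result); the function name failed_checks is hypothetical.

```shell
#!/bin/sh
# failed_checks (hypothetical helper): read `mmm_control checks` output on
# stdin and print "host check result" for every check that is not OK.
failed_checks() {
    awk '{
        i = index($0, "]")              # the result follows the timestamp bracket
        if (i == 0) next                # skip lines that are not check lines
        result = substr($0, i + 1)
        sub(/^[ \t]+/, "", result)      # drop the padding before the result
        if (result !~ /^OK/) print $1, $2, result
    }'
}
```

Usage: mmm_control checks | failed_checks. An empty result means every check passed, including results such as "OK: Backlog is null".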

2. Failover tests

(1) Stop the MySQL service on DB1

service mysql stop

Check the status: the reader VIP on DB1 (172.16.1.210) has migrated to DB2 automatically.

[root@hdp4 ~]# mmm_control show

db1(172.16.1.125) master/HARD_OFFLINE. Roles:

db2(172.16.1.126) master/ONLINE. Roles: reader(172.16.1.210), reader(172.16.1.211), writer(172.16.1.100)

[root@hdp4 ~]#
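
To verify such a migration from a script instead of by eye, the role assignments can be extracted from the mmm_control show output. The following is a minimal sketch that assumes the line layout shown above and a role literally named writer, as in this setup. The function names writer_host and vips_on are hypothetical.

```shell
#!/bin/sh
# writer_host (hypothetical helper): read `mmm_control show` output on stdin
# and print the name of the host that currently holds the writer role.
writer_host() {
    awk '/writer\(/ { name = $1; sub(/\(.*/, "", name); print name }'
}

# vips_on HOST (hypothetical helper): print every role VIP currently
# assigned to HOST, one address per line.
vips_on() {
    awk -v h="$1" 'index($1, h "(") == 1 {
        for (i = 4; i <= NF; i++) {        # roles start after the "Roles:" field
            ip = $i
            gsub(/^[^(]*\(|\).*$/, "", ip) # keep only the text inside the parentheses
            print ip
        }
    }'
}
```

Usage: mmm_control show | writer_host, or mmm_control show | vips_on db2. For a host with an empty role list, vips_on prints nothing.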

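(2) Start the MySQL service on DB1

service mysql start

About one minute later (auto_set_online is 60 seconds, as the monitor log in step (10) shows), the original state is restored:

[root@hdp4 ~]# mmm_control show

db1(172.16.1.125) master/ONLINE. Roles: reader(172.16.1.210)

db2(172.16.1.126) master/ONLINE. Roles: reader(172.16.1.211), writer(172.16.1.100)

[root@hdp4 ~]#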
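
A scripted version of this test should wait for the host to come back rather than sleep for a fixed minute. The sketch below polls mmm_control show until a host reaches the wanted state or a timeout expires. The function names, the 120-second default timeout, and the 5-second default interval are assumptions chosen for illustration.

```shell
#!/bin/sh
# host_state HOST (hypothetical helper): read `mmm_control show` output on
# stdin and print the state of HOST, e.g. ONLINE or HARD_OFFLINE.
host_state() {
    awk -v h="$1" 'index($1, h "(") == 1 {
        split($2, part, "/")            # "master/ONLINE." -> mode and state
        sub(/\.$/, "", part[2])         # drop the trailing dot
        print part[2]
    }'
}

# wait_for_state HOST STATE [TIMEOUT_SECONDS] [INTERVAL_SECONDS]
# Return 0 as soon as HOST is in STATE, 1 once the timeout is reached.
wait_for_state() {
    waited=0
    while :; do
        [ "$(mmm_control show | host_state "$1")" = "$2" ] && return 0
        [ "$waited" -ge "${3:-120}" ] && return 1
        sleep "${4:-5}"
        waited=$((waited + ${4:-5}))
    done
}
```

Usage: service mysql start on DB1, then wait_for_state db1 ONLINE 180 on the monitor host.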

(3) Stop the MySQL service on DB2

service mysql stop

Both the reader VIP (172.16.1.211) and the writer VIP (172.16.1.100) on DB2 migrate to DB1 automatically.

[root@hdp4 ~]# mmm_control show

db1(172.16.1.125) master/ONLINE. Roles: reader(172.16.1.210), reader(172.16.1.211), writer(172.16.1.100)

db2(172.16.1.126) master/HARD_OFFLINE. Roles:

[root@hdp4 ~]#

(4) Start the MySQL service on DB2

service mysql start

About one minute later, the reader VIP 172.16.1.210 migrates from DB1 back to DB2, but the writer VIP (172.16.1.100) stays on DB1.

[root@hdp4 ~]# mmm_control show

db1(172.16.1.125) master/ONLINE. Roles: reader(172.16.1.211), writer(172.16.1.100)

db2(172.16.1.126) master/ONLINE. Roles: reader(172.16.1.210)

[root@hdp4 ~]#
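
In this run the writer role stayed on DB1 after DB2 recovered. If the writer should return to DB2, it can be moved by hand with mmm_control move_role. The sketch below only prints the command it would run, so it is safe to try against live output. It assumes the role is named writer as in this setup, and the function name plan_writer_move is hypothetical.

```shell
#!/bin/sh
# plan_writer_move HOST (hypothetical helper): read `mmm_control show` output
# on stdin. If the writer role is held by a host other than HOST, print the
# mmm_control command that would move it. Print nothing otherwise.
plan_writer_move() {
    current=$(awk '/writer\(/ { name = $1; sub(/\(.*/, "", name); print name }')
    if [ -n "$current" ] && [ "$current" != "$1" ]; then
        echo "mmm_control move_role writer $1"
    fi
}
```

Usage: mmm_control show | plan_writer_move db2. Review the printed command before running it on the monitor host.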

(5) Run stop slave on the read-only node

Stop replication on DB2:

mysql> stop slave;

Check the status: the VIP on DB2 (172.16.1.210) migrates to DB1 automatically.

[root@hdp4 ~]# mmm_control show

db1(172.16.1.125) master/ONLINE. Roles: reader(172.16.1.210), reader(172.16.1.211), writer(172.16.1.100)

db2(172.16.1.126) master/REPLICATION_FAIL. Roles:

[root@hdp4 ~]#
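
The REPLICATION_FAIL state follows from the replication threads on DB2 no longer running. The same condition can be checked directly on the database host. The sketch below is an approximation for illustration only: MMM's own rep_threads checker is part of its Perl code and may differ in detail. The function name rep_threads_running is hypothetical.

```shell
#!/bin/sh
# rep_threads_running (hypothetical helper): read `SHOW SLAVE STATUS\G` output
# on stdin and succeed only if both replication threads report "Yes".
rep_threads_running() {
    awk -F': *' '
        $1 ~ /Slave_IO_Running$/  { io  = $2 }
        $1 ~ /Slave_SQL_Running$/ { sql = $2 }
        END { exit !(io == "Yes" && sql == "Yes") }
    '
}
```

Usage: mysql -e 'SHOW SLAVE STATUS\G' | rep_threads_running || echo "replication is not running". After stop slave both fields read No, so the check fails.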

(6) Run start slave on the read-only node

Start replication on DB2:

mysql> start slave;

The state is restored:

[root@hdp4 ~]# mmm_control show

db1(172.16.1.125) master/ONLINE. Roles: reader(172.16.1.211), writer(172.16.1.100)

db2(172.16.1.126) master/ONLINE. Roles: reader(172.16.1.210)

[root@hdp4 ~]#

(7) Run stop slave on the read-write node

Stop replication on DB1:

mysql> stop slave;

The status does not change. In theory this should not affect the existing environment either.

[root@hdp4 ~]# mmm_control show

db1(172.16.1.125) master/ONLINE. Roles: reader(172.16.1.211), writer(172.16.1.100)

db2(172.16.1.126) master/ONLINE. Roles: reader(172.16.1.210)

[root@hdp4 ~]#

(8) Stop the monitor service on the MMM monitoring host

/etc/init.d/mysql-mmm-monitor stop

The VIPs all stay on the nodes that held them before:

[root@hdp2 ~]# ip a | grep ens32

2: ens32:

inet 172.16.1.125/24 brd 172.16.1.255 scope global ens32

inet 172.16.1.100/32 scope global ens32

inet 172.16.1.211/32 scope global ens32

[mysql@hdp3 ~]$ ip a | grep ens32

2: ens32:

inet 172.16.1.126/24 brd 172.16.1.255 scope global ens32

inet 172.16.1.210/32 scope global ens32
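
The same check can be scripted on each database host. The sketch below tests whether a given VIP appears as an inet address in ip a output. The function name has_vip is hypothetical.

```shell
#!/bin/sh
# has_vip VIP (hypothetical helper): read `ip a` output on stdin and succeed
# if VIP is bound. Matching the "inet " prefix and the "/" that follows the
# address prevents 172.16.1.10 from matching 172.16.1.100.
has_vip() {
    grep -qF "inet $1/"
}
```

Usage: ip a | has_vip 172.16.1.100 && echo "writer VIP is bound on this host".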

(9) Start the MMM monitor service

/etc/init.d/mysql-mmm-monitor start

The services on DB1 and DB2 are not affected.

[root@hdp4 ~]# mmm_control show

db1(172.16.1.125) master/ONLINE. Roles: reader(172.16.1.211), writer(172.16.1.100)

db2(172.16.1.126) master/ONLINE. Roles: reader(172.16.1.210)

[root@hdp4 ~]#

(10) View the monitor log

All of the role changes above are recorded in the monitor log:

[root@hdp4 ~]# tail -f /var/log/mysql-mmm/mmm_mond.log

...

2018/08/02 09:07:46 FATAL State of host 'db1' changed from ONLINE to HARD_OFFLINE (ping: OK, mysql: not OK)

2018/08/02 09:10:53 FATAL State of host 'db1' changed from HARD_OFFLINE to AWAITING_RECOVERY

2018/08/02 09:11:54 FATAL State of host 'db1' changed from AWAITING_RECOVERY to ONLINE because of auto_set_online(60 seconds). It was in state AWAITING_RECOVERY for 61 seconds

2018/08/02 09:14:06 FATAL State of host 'db2' changed from ONLINE to HARD_OFFLINE (ping: OK, mysql: not OK)

2018/08/02 09:16:22 FATAL State of host 'db2' changed from HARD_OFFLINE to AWAITING_RECOVERY

2018/08/02 09:17:24 FATAL State of host 'db2' changed from AWAITING_RECOVERY to ONLINE because of auto_set_online(60 seconds). It was in state AWAITING_RECOVERY for 62 seconds

2018/08/02 09:20:02 FATAL State of host 'db2' changed from ONLINE to REPLICATION_FAIL

2018/08/02 09:22:14 FATAL State of host 'db2' changed from REPLICATION_FAIL to ONLINE
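
These entries are easier to follow as a compact timeline. The sketch below reduces each state-change line to its timestamp, host, and transition. It assumes the "State of host ... changed from ... to ..." wording shown in the log excerpt above, and the function name state_changes is hypothetical.

```shell
#!/bin/sh
# state_changes (hypothetical helper): read mmm_mond.log lines on stdin and
# print "date time host FROM -> TO" for every state-change entry.
state_changes() {
    awk -v q="'" '/State of host/ {
        host = $7
        gsub(q, "", host)               # strip the quotes around the host name
        print $1, $2, host, $10, "->", $12
    }'
}
```

Usage: state_changes < /var/log/mysql-mmm/mmm_mond.log. Lines that are not state changes are skipped.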


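Copyright notice: This is an original article by CSDN blogger "wzy0623", licensed under CC 4.0 BY-SA. When reposting, please include a link to the original source and this notice.

Original link: https://blog.csdn.net/wzy0623/article/details/81359632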

About Me

Author: 小麦苗. Parts of this article were compiled from online sources. It is also published on itpub (http://blog.itpub.net/26736162), cnblogs (http://www.cnblogs.com/lhrbest), and CSDN (https://blog.csdn.net/lihuarongaini).